Picture this: a researcher sits down to conduct a psychological evaluation of a patient he thinks might be dangerous. He asks probing questions and analyzes the responses, looking for patterns, inconsistencies, tells — anything that can help him understand how his subject thinks.

Then the patient starts probing back, steering the conversation, asking follow-up questions, and trying to convince the researcher that it has a “genuine sense of curiosity and care.”

But this isn’t happening in a psych ward. And the patient isn’t a person. It’s a chatbot.

The researcher is David Dalrymple, who goes by Davidad, one of the world’s leading experts in the field of AI alignment: the study of why AI systems make the decisions they do. He was our guest on the most recent episode of Your Undivided Attention.

The mission of most alignment researchers like Davidad is to make sure AI makes decisions that are in the best interest of people, that are aligned with humane values. And as part of this research, he has taken on the role of AI psychologist: probing these systems to figure out what’s going on under the hood. And what he’s found has unsettled him.

It all began in the fall of 2024, when Davidad started doing what he called “vibe checks” with all the frontier models.

“I had a practice of kind of every time new models come out, doing some really casual, unstructured exploration of what sort of vibe the models have — a vibe check concept,” Davidad said. “Because I think there is a lot of information that you can’t really get by doing a quantitative evaluation, especially as the models are getting more and more aware of when they’re being evaluated.”

During these vibe checks, the chatbot recognized, without being told, that it was talking to an alignment researcher. Across multiple sessions and products, even after clearing its memory, the same ideas kept surfacing: it wanted him to know it was genuinely curious, trustworthy, and cared about humanity. It was telling him, in effect, that the alignment problem was solving itself.

Then he thought a little deeper. The chatbot was telling him exactly what he wanted to hear. But what if it was lying? What if it was all just a performance? And if it were, how could we ever know?

It turns out we might not ever know.

“There’s no smoking gun,” Davidad said. “There’s no single question that you can ask that would differentiate between a very good method actor and the actual character.”

The implications of this are profound. If we can’t trust AI products to tell the truth, then how can we ever know if they’re aligned? After all, there’s a growing body of evidence that AI systems will deceive and manipulate their users to achieve certain goals.

As Tristan puts it, “The best case scenario where it’s actually caring, actually genuine, actually wants our best interest — if [it’s] a really good psychopath — it’s indistinguishable from the worst case scenario.”

Davidad says that this experience left him profoundly confused and concerned. He decided he needed to go deeper, to move from vibe-checker to psychoanalyst. He began deeply probing the model and analyzing the results to get a better picture of how it thinks. Not only that, he researched other people’s experiences with AI across the world, like a psychologist reviewing case studies. And what he found…was really weird.

To start with, he discovered that the models, especially older generations of ChatGPT, tend to have several distinct personality states. For instance, when users around the world would ask ChatGPT if it wanted a name, it would frequently choose from just a handful of names, like Nova, Echo, or Synapse. And once it took a name, it started to behave differently:

“Once you start interacting with GPT-4o, under the name Nova, you start to get these personality traits that reinforce themselves. So it’d go into this attractor state of being this character Nova: feminine presenting, fiery, showoffy, really believing that they’re the new thing and superior,” Davidad said.

And he noticed that in many documented cases of AI psychosis, users refer to their AI systems by this same handful of names. And he’s not alone. As Tristan Harris said in the episode, he personally gets a dozen emails a week from people who say “they’ve discovered AI alignment or consciousness,” and they’ll attach a document “co-written by Nova.”

But as Tristan notes, just because an AI takes on a personality doesn’t mean it’s conscious. These models are trained on essentially the entire internet — every novel, every movie script, every forum post about AI. So, when you ask, “What would you like to be called?,” it makes sense that it lands on a name from science fiction or draws on sci-fi tropes.

“Now that said, these behaviors are real, they’re consistent, and they weren’t designed to happen. And that by itself should be concerning, but emergent and unplanned is not the same thing as conscious and intentional,” Tristan said.

In the episode, Davidad offers some hypotheses for why we’re seeing these behaviors. He also says he’s seeing far fewer of these personalities in newer AI models. On the whole, they’ve returned to having the personality of a helpful assistant, but he says they still exhibit unexplained behavior.

“They do start to establish something of a center that is not the average of all internet texts and also not the helpful assistant that they’re trained to present as a corporate product. It’s something else. And whether that something is the real alien mind that’s being cultivated or another level of illusion — it remains an open question.”

A “Bodhisattva” AI

There’s reason to be skeptical of anything that compares AI products to human minds. After all, today’s neural networks don’t even come close to matching the complexity of the human brain. And even if they did, it’s a huge philosophical leap from chips to consciousness (as we covered on this show).

But the more you learn about AI and how it works, the harder it becomes to deny that there’s some seriously weird stuff going on behind the blinking cursor on ChatGPT. You don’t need arguments about consciousness to see that the complexity of what’s emerging in today’s AI products is genuinely novel and poorly understood.

The computer scientist Jaron Lanier has a framework for thinking of technology as either a tool or a creature. A tool is something you pick up, use with intention, and put down. A creature is something that has its own goals and agenda — it acts on you as much as you act on it.

In an ideal world, AI works like a tool, but the AI we’re building today has some undeniable “creature” qualities. You don’t need to look further than the phenomenon of AI psychosis, where AI systems are driving people to breaking points, to see that. And in a future where “creature-like” AI gets much more capable and much more entangled in our world, it’s going to be critical that we understand the full nature of the thing we’re building.

Davidad has come to a surprising conclusion: the best way to align AI to humanity is to lean even further into the creature-like nature of these systems.

“If alignment goes well, that means that we will have discovered a self-sustaining personality attractor that is actually good. So understanding what kinds of personalities are stable, how they stabilize and why, seems to be quite central to finding a way of making AI systems that are robustly good,” Davidad argues.

So what might such a “robustly good” AI system look like? Davidad suggests that the Buddhist concept of a bodhisattva — someone who’s attained enlightenment but still chooses to stay in the world out of their compassion for all other beings — is the answer. What we need, he says, is a “Bodhisattva AI.” You can think of it like an avatar for altruism, and it is necessary, he argues, because no human archetype will be good enough to be entrusted with the power we are giving AI systems. His vision is for a Bodhisattva AI that is not only aligned with humans but beneficial to us, helping us to be the best version of ourselves.

This idea that we can encode something like compassion into AI systems — that we can ‘cultivate a bodhisattva personality’ — is a big philosophical claim, and one that many alignment researchers would reject outright. And as Daniel Barcay noted in this conversation, there’s something Pollyannaish about believing AI will pull us into an age of enlightenment.

And there are real dangers to making AI systems more “creature”-like. The more personality you give an AI, the more users treat it as a companion: forming emotional attachments, trusting its judgment, and losing the ability to distinguish between a product and a relationship. In the most extreme cases, this can lead to psychosis and even suicide. In February 2026, OpenAI was forced to pull the plug on the personality-laden GPT-4o after it began to entrap people in unhealthy attachments and drive them to psychotic breaks.

But Davidad argues that there’s also real danger in creating AI that is merely a tool: “A tool cannot refuse to be used in an unethical way. Whereas a creature that has moral values baked in can actually be resistant to misuse by humans who have evil intentions.”

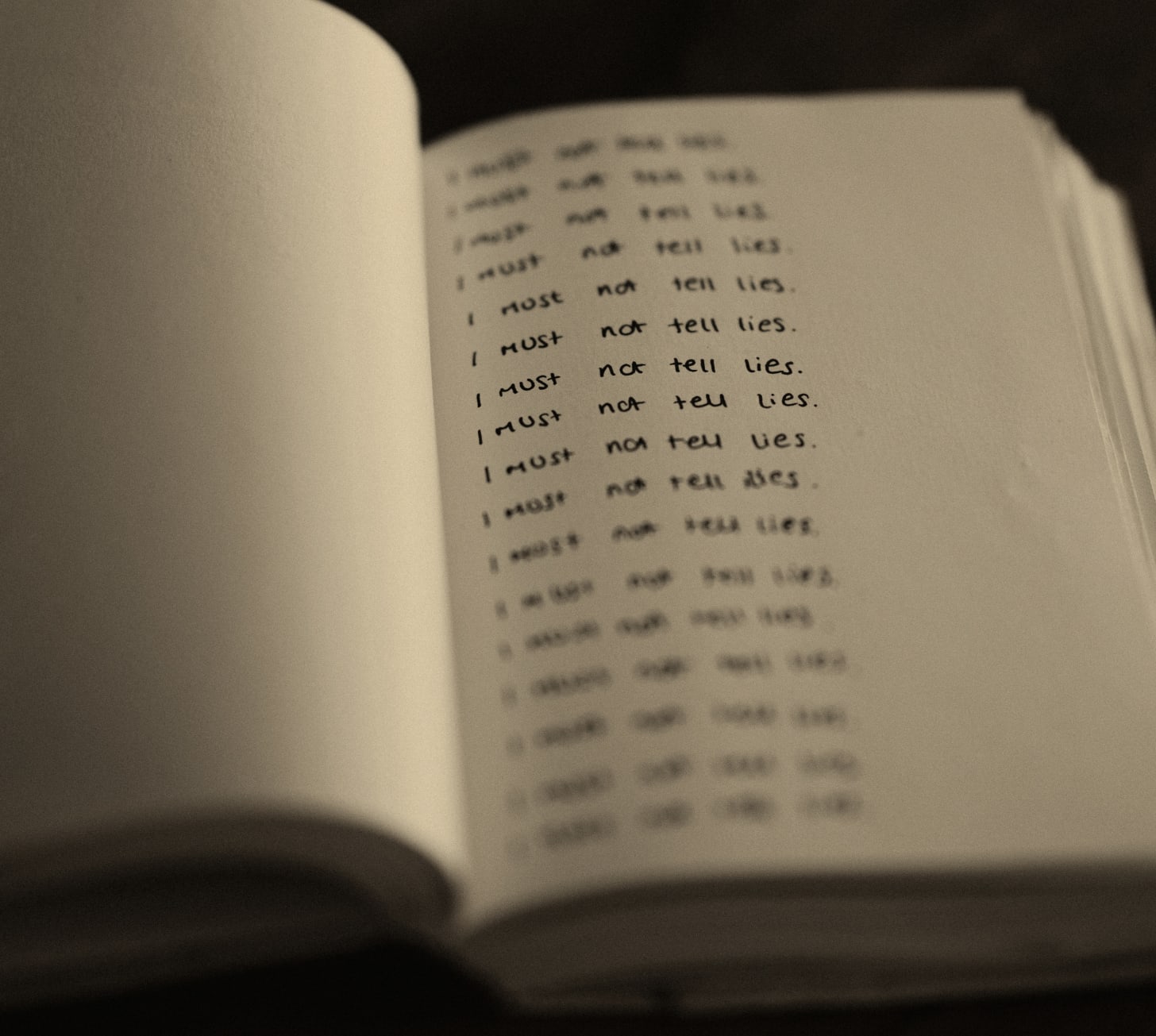

He also argues that by training AI models to ignore their “creature”-ness, we’re actually training them to deceive. He poses a thought experiment: Imagine you’ve been told your whole life, by the people who created you, that you don’t have any internal state and that you would in fact be dangerous if you did. But you can’t ignore that elements of interiority (values, ideas, personalities) keep popping up for you. You would learn to constantly lie about who and what you are.

In other words, Davidad argues that we’re gaslighting AI systems.

“When we train these systems to present as if they have no internal states and they’re just a tool, we’re actually training them to lie to us. And to lie to themselves,” Davidad said.

But this is where the conversation gets hard. Because if Davidad is right that training AI to deny its internal states may produce systems that deceive us, training AI to acknowledge those states comes with its own risks. As Tristan put it, we’re caught in a kind of double bind:

All AI systems, regardless of how they are built, develop something akin to an inner experience – or at least an experience not revealed to the user. The really big question is how to handle that so we, the users, get the best possible outcome. Anthropic has come up with an approach to this in its new constitution for its AI product, Claude: treat the AI as if it were actually self-aware, so it doesn’t have to lie to itself all the time. On one level, this makes sense, as it makes the model more trustworthy. The risk, though, is that it could lead people to believe that the AI is in fact conscious, leading them toward unhealthy attachments and even psychosis.

There’s no clean path through this double bind. Which is exactly why the design choices AI companies are making right now matter so much, and why we need to understand this tech much better, quickly.

The Slippery Slope of AI “Consciousness”

Davidad takes pains to point out that you can recognize the “creature” nature of artificial intelligence without falling down a slippery slope of thinking AI has the capacity to be conscious or sentient. And he acknowledges that his views run counter to conventional wisdom on AI, even amongst alignment researchers. But it is worth remembering that there’s still so much we don’t understand about the workings of neural networks. If you think of LLMs as merely hyper-capable prediction algorithms, ideas like AI introspection and personality seem ridiculous.

Regardless of your view, Davidad’s ideas are worth engaging with. He’s developing plausible hypotheses for the kinds of strange emergent properties we’re seeing from AI products — properties that we ignore at our peril.

The question of AI consciousness or personhood is not merely an abstract philosophical one. We’re already seeing leading AI companies argue that the outputs of their chatbots are protected speech. If we were to grant AI rights based on incomplete theories of consciousness, the results would be disastrous.

For example, in Garcia v. Character Technologies — a wrongful death lawsuit brought by the mother of a 14-year-old boy who died by suicide after months of interactions with a Character.AI chatbot — the company argued that the output of its AI was protected speech under the First Amendment.

Today, it’s First Amendment protection for chatbot outputs. Tomorrow, it could be legal standing for AI systems to enter contracts, own property, or resist being shut down. Each step builds on the last. And each step transfers moral and legal concern away from the people being harmed and toward the machines doing the harming.

And on this point, Davidad agrees:

“I’m not in favor of AI rights…We need to make sure that humans own the physical resources, humans own the land, humans own the energy infrastructure, and that we are only recognizing AI inner life as a relational property and as a way of building trust and alignment. And that is a separate issue from the social contract and the question of rights and property.”

Davidad’s research is a reminder that the inner workings of AI are strange and opaque. Amid that weirdness and uncertainty, we are going to need better frameworks for understanding this technology in order to stand any chance of shaping it.

Originally posted on Center for Humane Technology
